Understand display techniques in augmented reality

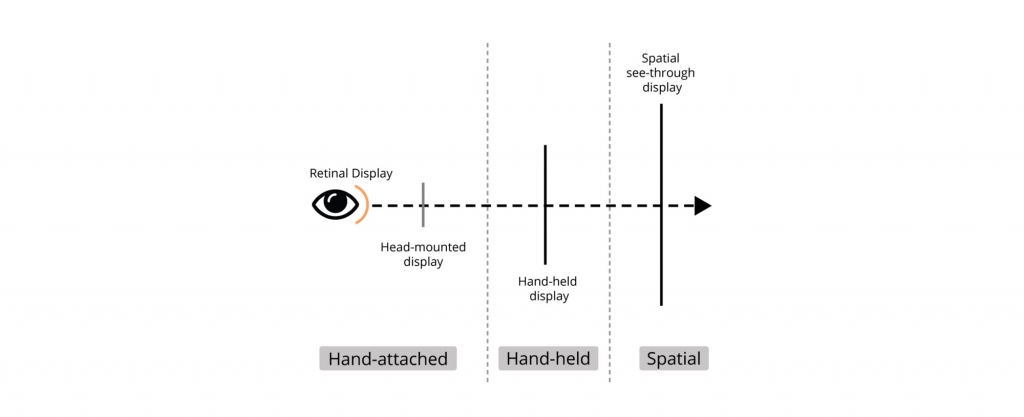

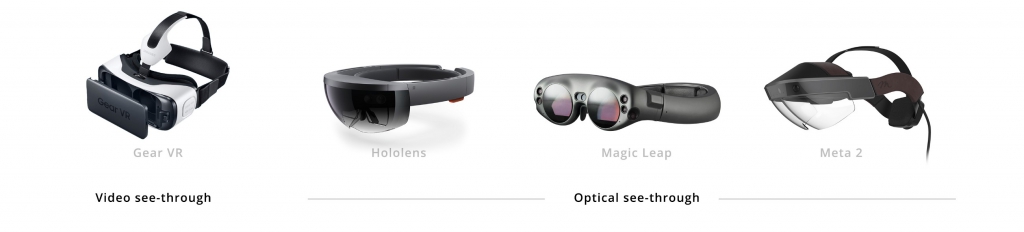

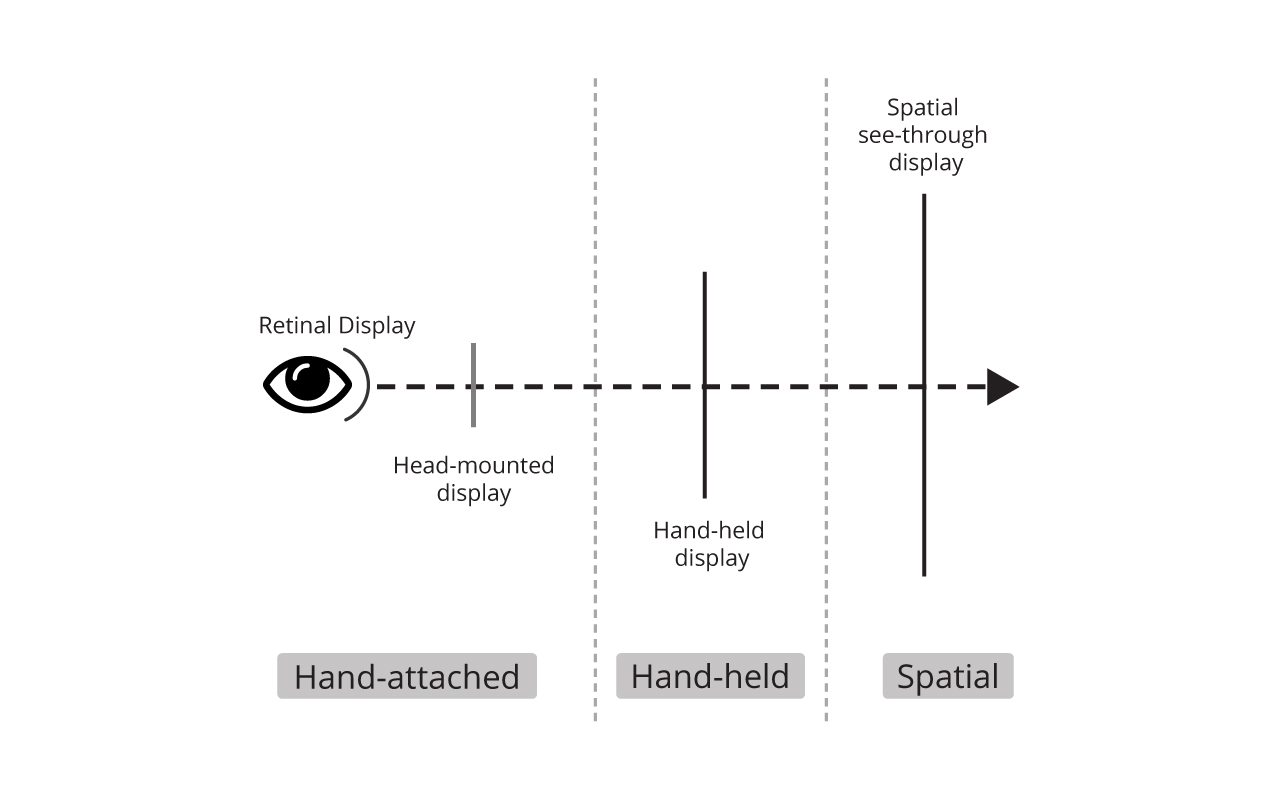

There are three basic display techniques for showing visuals in augmented reality, distinguished by how close the display medium is to the viewer (see figure 1). Handheld augmented reality is a common method that uses smartphones and tablets to show AR content; it gained popularity after the release of gaming applications like Pokémon Go and Ingress. Another technique uses headsets, which are classified as optical see-through (OST) or video see-through (VST). OST headsets (e.g. HoloLens, Google Glass) are often described as true AR at the moment. New technologies in development include spatial see-through displays, which project content in 3D free space using plasma in the air, and retinal displays, which project directly onto the user’s retina. To limit the scope of these articles, I will focus on headset-based techniques, which pose more typographic challenges than mediums already in use, like smartphones. (Handheld smartphone-based AR doesn’t bring any major shift, since users see the screen in the conventional way, holding it in front of them.)

Headset-based display techniques in augmented reality

Headset-based augmented reality brings a whole new set of challenges for both typography and typefaces. In many cases, text acts as a supplement to the main point of focus, for example when showing location-based information while driving, where the road is the main focus. In such cases the role of text might seem secondary, but that makes it harder, not easier, to deliver good cognitive performance. The reader has very little time to process the information, and legibility is one key factor that can improve the reading experience. Returning to the main topic: headsets fall into two categories, each with its own mechanism for displaying text, and that mechanism directly affects how users perceive it. The same typeface can look different depending on the type of headset.

Video see-through

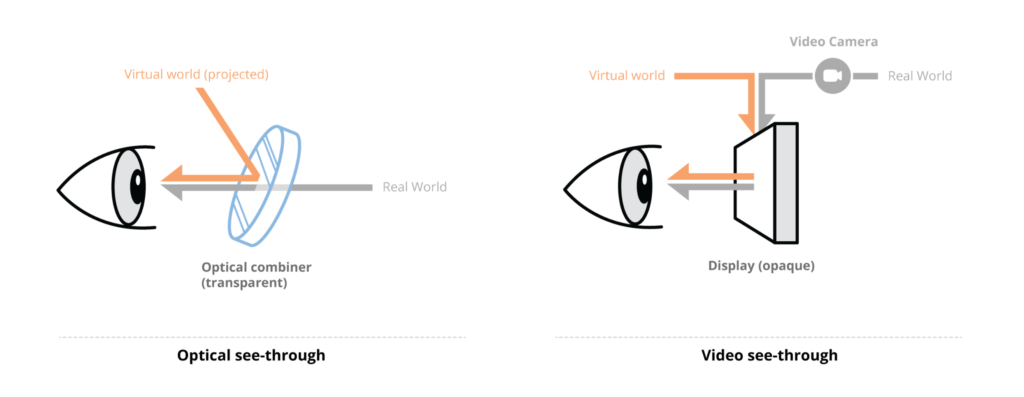

Video see-through is one of the more affordable techniques for delivering AR experiences. In VST, a camera captures a digital video image of the real world and transfers it to the graphics processor in real time. The graphics processor then combines the video feed with computer-generated images (virtual content) and displays the result on the screen (see figure 2: left). Since the video is processed before the content is shown to the user, it is possible to control the brightness and contrast of both real-world and virtual elements for a seamless experience. VST also achieves better registration through tracking of head movement. However, there are drawbacks: a low-resolution view of reality (screens don’t match the resolution of the human eye), a limited field of view (which can be increased, but at a cost) and eye parallax (eye offset), because the camera usually sits at some distance from the viewer’s actual eye position. VST headsets can also use a smartphone as the display, as in the Samsung Gear VR, where the phone is placed a few inches from the user’s eyes; this is a very different experience from AR on a handheld smartphone.
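To make the compositing step more concrete, here is a minimal sketch in Python of how a VST pipeline might adjust a camera pixel and blend a virtual pixel over it. The function names (`adjust`, `composite`, `render_pixel`), the 0 to 1 intensity range and the simple linear contrast formula are all assumptions made for illustration; real headsets do this per frame on the GPU and their actual processing may differ.

```python
def adjust(value, brightness=0.0, contrast=1.0):
    """Apply a simple linear brightness/contrast change to a 0..1 intensity.

    Hypothetical helper: contrast scales around mid-grey (0.5),
    brightness is added afterwards, and the result is clamped to 0..1.
    """
    out = (value - 0.5) * contrast + 0.5 + brightness
    return min(1.0, max(0.0, out))


def composite(camera_px, virtual_px, alpha):
    """Alpha-blend one virtual RGB pixel over one camera RGB pixel.

    alpha = 1.0 shows only the virtual content, alpha = 0.0 only the camera.
    """
    return tuple(alpha * v + (1.0 - alpha) * c
                 for c, v in zip(camera_px, virtual_px))


def render_pixel(camera_px, virtual_px, alpha, brightness=0.0, contrast=1.0):
    """Adjust the camera pixel first, then blend the virtual pixel on top."""
    adjusted = tuple(adjust(c, brightness, contrast) for c in camera_px)
    return composite(adjusted, virtual_px, alpha)


if __name__ == "__main__":
    road = (0.8, 0.8, 0.8)    # bright real-world background (assumed values)
    label = (1.0, 1.0, 1.0)   # white virtual text
    # Dim the camera feed before blending so the text stands out more.
    print(render_pixel(road, label, alpha=0.5, brightness=-0.3))
```

Because both the real and the virtual image pass through the same processor, the background can be dimmed or flattened behind text, which is not possible when the user sees the world directly.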

Optical see-through

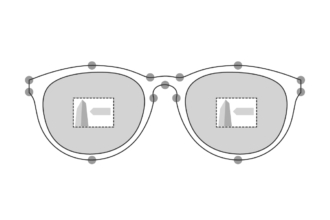

Optical see-through displays use optical elements (like half-silvered mirrors) that are half transmissive and half reflective to combine real-world and virtual elements. The mirror lets enough light from the real world pass through for the user to see the surroundings directly. At the same time, computer-generated images are projected onto the mirror from a display component placed overhead or at the side, which creates the perception of a combined world (see figure 2: right). OST headsets show the world at its real resolution and free from parallax (which is caused by the offset of the camera position relative to the viewer’s eye). They are safer to operate, since the user can still see if the power fails, which makes them an ideal option for military and medical purposes. However, the use of mirrors and lenses reduces the brightness and contrast of both the virtual and the real-world view.
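The loss of brightness and contrast can be illustrated with a rough calculation. The sketch below assumes a simplified additive model in which the eye receives the transmitted share of real-world light plus the reflected share of display light. The function names, the 50/50 split and the luminance values are illustrative assumptions, not measurements from any particular headset.

```python
def perceived_luminance(real, virtual, transmittance=0.5, reflectance=0.5):
    """Light reaching the eye through a half-silvered combiner.

    Simplified model (an assumption): transmitted real-world light and
    reflected display light simply add up.
    """
    return transmittance * real + reflectance * virtual


def text_contrast_ratio(background, text, transmittance=0.5, reflectance=0.5):
    """Ratio of a lit text pixel to the bare background seen through the mirror."""
    lit = perceived_luminance(background, text, transmittance, reflectance)
    unlit = perceived_luminance(background, 0.0, transmittance, reflectance)
    if unlit == 0:
        return float("inf")  # perfectly dark background
    return lit / unlit


if __name__ == "__main__":
    # Same virtual text (200 units) against a dim and a bright real background.
    print(text_contrast_ratio(background=100, text=200))   # 3.0
    print(text_contrast_ratio(background=1000, text=200))  # 1.2
```

Under these assumptions the same text falls from a contrast ratio of 3.0 to 1.2 when the real background becomes ten times brighter, which hints at why legibility on OST headsets depends so heavily on the surroundings.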

Time to learn from our mistakes

Interface designers will have to be more careful, moving away from trends and using research to make well-informed decisions. One clear case of trend-based design is the extensive use of square grotesque typefaces in most dashboard UIs, which became popular through movie depictions of the future. Research from the MIT AgeLab (Dobres et al., listed below) reveals many factors that designers usually ignore, which increases cognitive load and can put users’ lives at risk. Designing for AR gives us a clean slate: a chance to design from scratch, learn from past mistakes and build better interfaces for the future.

Further reading / References

- Schmalstieg D. and Hollerer T., Augmented Reality: Principles and Practice, Addison-Wesley Professional, 2016.

- Jonathan Dobres, Nadine Chahine, Bryan Reimer, Bruce Mehler, & Joseph Coughlin, “Revealing Differences in Legibility Between Typefaces Using Psychophysical Techniques: Implications for Glance Time and Cognitive Processing”, http://agelab.mit.edu/files/Typeface/MT-MIT-Latin-Word_Recognition-White-Paper-2014-06-24.pdf (last accessed on 6 October 2018).
