If you are new to AR displays, I recommend reading these articles first: Understanding display techniques in Augmented Reality and Variables that affect the experience in AR.

This has directed researchers to look for alternative solutions to the underlying problem, and I’m going to share some of them in this article. If you are not a fan of long-form reading, you can skim through and watch the videos.

Key concepts to understand:

Accommodation

Accommodation is the process by which the muscles of the eye change the focal length of its lens (by changing the shape of the elastic lens). This helps our eyes focus on objects at different distances.

Vergence

Vergence is the process in which the eyes rotate in equal and opposite directions in order to fixate on an object.

Foveated Displays

Foveation in our eyes happens when we focus on certain objects: the object in focus appears crisp and clear, while the surroundings and background become blurred. In foveated displays, a high-resolution inset is presented to the fovea (the part of the retina that provides the clearest vision) and a lower-resolution image is presented to the rest of the retina. This requires eye tracking to determine the position of the foveal region.
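To make the idea concrete, here is a minimal sketch of how a renderer might decide which image a point belongs to. It is only an illustration under stated assumptions: the function names and the 10-degree inset radius are hypothetical and do not come from any of the headsets discussed here, and angles are treated with a flat small-angle approximation.

```python
import math

def eccentricity_deg(gaze_deg, point_deg):
    """Angular distance between the gaze direction and a point.

    Both arguments are (x, y) visual angles in degrees. A flat
    small-angle approximation is used, which is an assumption.
    """
    dx = point_deg[0] - gaze_deg[0]
    dy = point_deg[1] - gaze_deg[1]
    return math.hypot(dx, dy)

def choose_layer(gaze_deg, point_deg, inset_radius_deg=10.0):
    """Return which image should draw the point.

    inset_radius_deg is a hypothetical size for the high-resolution
    inset, not a figure taken from a real device.
    """
    if eccentricity_deg(gaze_deg, point_deg) <= inset_radius_deg:
        return "foveal"
    return "peripheral"
```

The same point can switch layers as soon as the eye moves, which is why the position of the gaze has to be tracked continuously.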

Maxwellian displays

These displays deliver always-in-focus images, which removes the accommodation depth cue without requiring eye tracking or dynamic lenses (which shift position). 3D and depth perception come solely from the vergence cue (the simultaneous movement of both eyes in opposite directions to obtain or maintain single binocular vision) through always-in-focus stereoscopic images, without cue conflict. This allows the optics to be very compact and keeps the computational overhead of rendering images low.

Multi-focal Rendering

In headsets, flat screens are used to simulate depth, which requires the user to stare at a screen only inches away from their eyes. However, the eyes converge on a point in the simulated world that is much further away. This results in conflicting signals about focus and eye alignment, known as the accommodation-vergence conflict, which causes nausea, dizziness, eyestrain, and inaccurate depth perception. (Read the detailed explanation here)
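The size of this conflict can be estimated with a little arithmetic. The sketch below is a rough illustration, not a model from any of the papers: it assumes an interpupillary distance of 63 mm, a target straight ahead of the viewer, and it expresses focus demand in diopters (1 / distance in metres). All function names are hypothetical.

```python
import math

def vergence_angle_deg(distance_m, ipd_m=0.063):
    """Angle between the two lines of sight when both eyes fixate a
    point straight ahead at distance_m. The 63 mm IPD is an assumed
    typical value."""
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))

def accommodation_demand_d(distance_m):
    """Focus demand in diopters for an object at distance_m."""
    return 1.0 / distance_m

def conflict_d(focal_distance_m, virtual_distance_m):
    """Mismatch (in diopters) between where the optics make the eye
    focus and where the stereo image makes the eyes converge."""
    return abs(accommodation_demand_d(focal_distance_m)
               - accommodation_demand_d(virtual_distance_m))

# Example: optics focused at 2 m, virtual object rendered at 0.5 m.
mismatch = conflict_d(2.0, 0.5)  # 1.5 diopters
```

The mismatch grows quickly for near objects, since diopters are the reciprocal of distance, which is one way to see why close-up content tends to be the most uncomfortable.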

To trigger accommodation-related visual cues (which reduces the possibility of nausea), a multi-focal rendering system displays objects with a level of blur appropriate for their depth relative to the viewer. For displays with a fixed optical distance (or a set of fixed distances), this blur is introduced computationally using different blurring algorithms.
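As a rough idea of how much blur "appropriate for their depth" means, the sketch below uses the common thin-lens approximation in which the angular blur diameter (in radians) is about the pupil diameter (in metres) times the defocus (in diopters). This is a simple geometric estimate, not the algorithm used by any particular headset; the function names, the 4 mm pupil and the 20 pixels-per-degree display are assumptions for illustration.

```python
import math

def defocus_diopters(focus_distance_m, object_distance_m):
    """Difference in diopters between where the eye is focused and
    where the object lies."""
    return abs(1.0 / focus_distance_m - 1.0 / object_distance_m)

def blur_angle_rad(pupil_diameter_m, focus_distance_m, object_distance_m):
    """Approximate angular diameter of the blur circle in radians
    (small-angle, thin-lens approximation)."""
    return pupil_diameter_m * defocus_diopters(focus_distance_m,
                                               object_distance_m)

def blur_diameter_px(pupil_diameter_m, focus_distance_m,
                     object_distance_m, pixels_per_degree):
    """Blur diameter expressed in display pixels, for a display with
    the given (assumed uniform) angular pixel density."""
    angle_deg = math.degrees(
        blur_angle_rad(pupil_diameter_m, focus_distance_m,
                       object_distance_m))
    return angle_deg * pixels_per_degree

# Example: 4 mm pupil, eye focused at 0.5 m, object at 2 m,
# on a hypothetical 20 pixels-per-degree display.
blur_px = blur_diameter_px(0.004, 0.5, 2.0, 20.0)
```

A renderer could use a value like this as the kernel size when blurring objects that are not at the depth the user is focused on. The same formula also hints at why a very narrow beam of light entering the eye keeps images sharp at any distance: a smaller effective aperture gives a smaller blur for the same defocus.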

What’s happening in the industry?

Half Dome (Oculus)

The Half Dome headset is capable of producing lifelike VR experiences by adjusting the displays to match your eye movements. It is powered by eye-tracking cameras, wide-field-of-view optics, and independently focused displays. The result is crisp image quality wherever you look in the VR scene.

However, this hardware capability is not new: the first Half Dome prototype was introduced in May 2018 (during F8). The hardware innovation at that time was limited in how naturally it let you see objects, since everything stayed in focus. To complement it, Facebook Reality Labs (FRL) created a new AI-powered rendering system called DeepFocus (announced in December 2018), which produces what they call realistic retinal blur. It mimics how we see objects in daily life by producing blur in real time, just as our eyes blur everything around the thing we are focusing on. This blur is one of the cues our brain uses to perceive the three-dimensional world and understand the depth (distance) of objects in view.

This may sound easy, but achieving what the DeepFocus system does is no easy task, since everything has to be done in real time with no delays. Otherwise, remember how your head was spinning the last time you put on a VR headset.

“This is about all-day immersion.”

-Douglas Lanman (FRL’s Director of Display Systems Research)

I am not sure how easy that will be, but it surely sounds enticing and reminds me of the dystopian world of Ready Player One.

For me, the most fascinating part of the whole project is the approach of leveraging the resources at hand to solve problems instead of waiting for hardware manufacturers to increase their capabilities. Douglas Lanman, FRL’s Director of Display Systems Research, didn’t wait for hardware manufacturers (especially processor makers) to come up with something new that could provide enough processing power for these tasks. He decided to take the software path and developed an AI system to solve the issue.

Research Paper

Accommodation-Free Head Mounted Display (Cambridge University | Huawei)

Cambridge University’s Centre for Advanced Photonics and Electronics (CAPE), with support from Huawei, revealed a headset (based on a Maxwellian display) capable of reducing or eliminating common negative effects of HMDs such as eye strain and nausea.

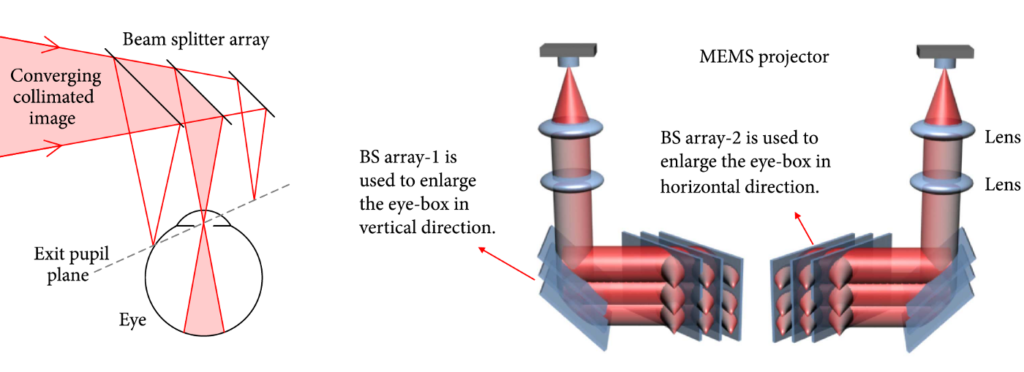

Their HMD has a significantly enlarged eye box and a field of view of 36°, designed for a comfortable viewing experience. It uses pixel beam scanning to ensure images stay in focus irrespective of the distance at which the user is fixating. This was made possible by using partially reflective beam splitters that form an additional exit pupil (a virtual aperture that allows rays to pass through). Narrow pixel beams pass through it, travelling parallel to each other without dispersing in different directions, thereby forming a high-quality image on the retina.

“Our research offers up a wearable AR experience that rivals the market leaders thanks to its comfortable 3-D viewing which causes no nausea or eyestrain to the user. It can deliver high quality clear images directly on the retina, even if the user is wearing glasses. This can help the user to see displayed real world and virtual objects clearly in an immersive environment, regardless of the quality of the user’s vision.”

-Professor Daping Chu, director of the Centre for Photonic Devices and Sensors and Director of CAPE

The headset is capable of producing high brightness and can be used in a wide variety of indoor and outdoor scenarios. The next steps in the research are to explore applications of the technology in CAD (computer-aided design) development, hospitality, data manipulation, outdoor sport, defence and construction, and to improve the bulky form factor of the headset towards a more usable, compact, glasses-based design.

Research Paper

Foveated AR: Dynamically-Foveated Augmented Reality Display (Nvidia)

At SIGGRAPH in Los Angeles, Nvidia showcased a novel display technology that surpasses the design constraints of existing display techniques by combining mechanical and optical elements with GPU computation for machine learning and rendering.

The headset combines two displays per eye: a high-resolution display with a small field of view, used for the region where the user is fixating to produce a sharp image on the retina, and a low-resolution display for peripheral vision. This results in a better user experience and more efficient use of power when rendering the graphics in view.

An infrared camera tracks the user’s gaze, which controls the movement of the foveal display units described above. The display supports accommodation cues by varying the focal depth of the micro-display in the foveal region. This allows the display to offer a wide field of view and high resolution while still making a reasonable reduction in form factor. The prototypes support foveal resolutions of 30, 40 and 60 cpd (cycles per degree; 60 cpd is the maximum resolution of the retina) with a net field of view of 85° × 78° per eye.
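A quick back-of-the-envelope calculation shows why the high resolution is confined to the foveal region. The sketch below assumes that the field of view figures are in degrees, that one cycle needs at least two pixels, and that pixel density is uniform across the view; these are simplifying assumptions of mine, and the function names are hypothetical.

```python
def pixels_for_fov(cpd, fov_deg):
    """Pixels needed along one axis to show `cpd` cycles per degree
    across `fov_deg` degrees, assuming 2 pixels per cycle and a
    uniform angular pixel density."""
    return round(2 * cpd * fov_deg)

def megapixels_per_eye(cpd, fov_h_deg, fov_v_deg):
    """Total pixel count per eye, in megapixels, if the whole field
    of view were rendered at the same resolution."""
    total = pixels_for_fov(cpd, fov_h_deg) * pixels_for_fov(cpd, fov_v_deg)
    return total / 1e6

width_px = pixels_for_fov(60, 85)    # 10200
height_px = pixels_for_fov(60, 78)   # 9360
full_view_mp = megapixels_per_eye(60, 85, 78)  # about 95.5 megapixels
```

Under these assumptions, a single uniform panel would need roughly 95 megapixels per eye, which helps explain why a small high-resolution display that follows the gaze is combined with a low-resolution one for the periphery.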

Another key advance in the prototype is a bigger eye box and field of view, which exceed the optical invariant of the Maxwellian display through dynamic positioning of holographic optical elements. This is made possible by motors that move the holographic display unit based on gaze tracking.

The prototype complements the mechanical components with a real-time rendering system that uses gaze tracking, foveated varifocal rendering, and calibration of the geometric distortion, intensity and colour of the different optical paths, based on deep learning.

Research Paper

Matching Prescription & Visual Acuity based AR headsets (Nvidia)

In pursuit of pushing current AR headsets towards a more compact form factor, Nvidia introduced its Prescription AR glasses prototype, which has set a milestone. It has been recognized for that, winning SIGGRAPH’s Best of Show Emerging Technology award (2019).

Currently, the race to capture the AR market is focused on creating the best-in-class headset. However, a huge chunk of users has been ignored: people who wear prescription glasses (including me). Using AR/VR headsets is an issue for folks like me. Either we cannot wear our spectacles with the headset at all, or the headsets that do make room for them feel bulky and are not ideal for prolonged use.

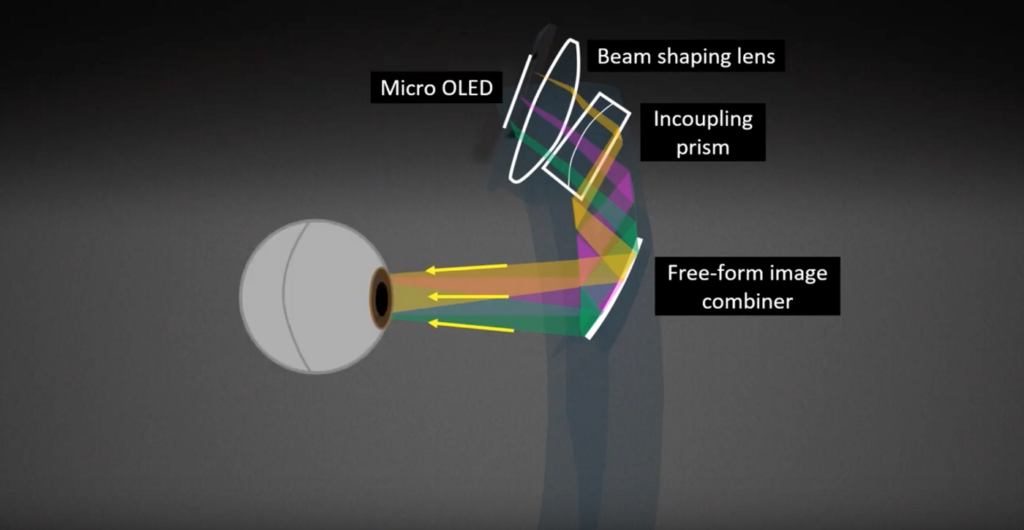

This is where Nvidia’s Prescription AR glasses take the reverse approach to the current trend of headsets that are compatible with prescription glasses. Instead, they incorporate a functional AR display into a prescription lens by combining it with a micro-OLED display. According to Nvidia, the display is thinner and lighter than any existing headset and has a wider field of view.

The Prescription AR display consists of a beam-shaping lens and an in-coupling prism that transfer an image generated on the micro-OLED to the eye via total internal reflection. The freeform combiner corrects the viewer’s vision while delivering an augmented image located at a fixed focal depth.

Research Paper

Conclusion

Out of all the headsets discussed in this article, only Half Dome by Oculus seems to be heading towards mass production. The other three prototypes seem to be for research purposes only, so we’ll have to wait for other researchers to test the claims made and validate their usefulness. But let’s be optimistic and hope that companies will license or build upon these new alternatives to reduce friction in terms of both experience and form factor.